The advent of artificial intelligence (AI) has brought significant advances in many sectors, yet it has also created severe threats, particularly to child safety. The UK government has vowed to outlaw AI tools that create child sexual abuse material (CSAM), a move that reflects the urgent need for comprehensive measures against the alarming rise of AI-enabled child exploitation. This article examines the implications of these measures, the chilling reality of AI-generated abuse, and the wider challenge of protecting children in an increasingly digital world.

The UK government’s announcement marks a significant step toward eradicating tools that facilitate child exploitation. This “world-leading” legislation seeks to make it illegal to possess, create, or distribute AI tools specifically designed to generate CSAM, with potential prison sentences of up to five years for violators. It also aims to criminalize the possession of materials that provide guidance on how to use these AI tools for malicious purposes, with the maximum sentence set at three years.

These new laws follow mounting concern over the rapid proliferation of AI-generated imagery, which is reported to be disturbingly realistic. Existing laws already prohibit the possession of AI-generated CSAM; this legislation goes further by targeting the underlying tools that produce it. The shift reflects a more proactive approach to combating child exploitation rather than merely reacting to its consequences.

British officials, including Jess Phillips, the safeguarding minister, have underscored the need for a global response to a problem that transcends national borders. As technological capabilities evolve, so too do the strategies of predators who exploit them. AI tools have become a significant threat in the grooming and abuse of children, which makes international cooperation and consistent legislation across countries a necessity.

The Home Office has indicated that these AI tools can manipulate existing images in frightening ways, such as “nudeifying” real photographs or superimposing children’s faces onto inappropriate backgrounds. This manipulation causes deep distress for real children, who fear their images will be misused. Organizations like the NSPCC play a crucial role in supporting affected children; its Childline service has reported distressing calls from victims dealing with the consequences of AI-generated images.

The announcement also introduces a new offense for individuals who run websites where predators share CSAM or instructions for grooming, punishable by up to ten years in prison. Although existing laws contain similar prohibitions, the new measures are designed to give the courts greater leverage in punishing offenders and to provide a clearer framework for accountability.

Additionally, the measures would empower the UK Border Force to inspect the digital devices of individuals suspected of posing a sexual risk to children. This highlights the government’s intent to stay ahead of evolving predatory tactics that use technology to exploit vulnerabilities.

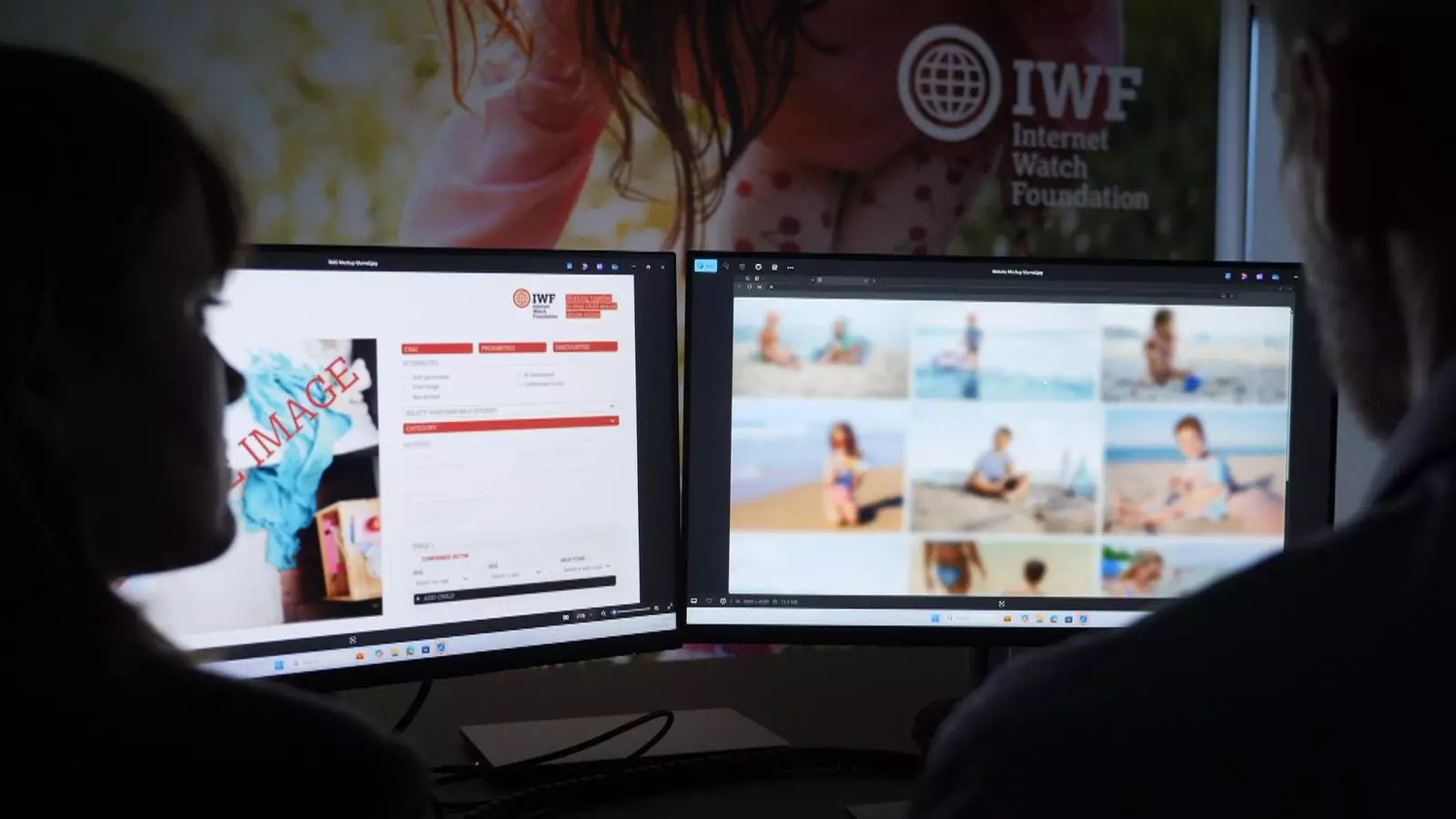

Recent statistics from the Internet Watch Foundation (IWF) starkly illustrate the severity of the issue. The IWF identified 3,512 AI-generated CSAM images on a single dark web site over just a 30-day period in 2024, and it reported a 10% increase in the prevalence of the most severe category A images. These numbers form a sobering backdrop to the call for stronger legal and societal safeguards against child exploitation.

Derek Ray-Hill, the interim chief executive of the IWF, welcomed the government’s plan, which aligns with the organization’s longstanding call for upgraded legal frameworks to tackle the rapidly evolving threats posed by AI technology. As the public and private sectors unite in their efforts to combat child exploitation, it is crucial to recognize both the gravity of the situation and the need for legislation that keeps pace with it.

These legislative measures represent a critical first step, but combating AI-generated exploitation is a complex challenge that requires ongoing diligence, education, and cooperation among stakeholders. The fight against child abuse in this new technological frontier is far from over. Moving forward, it will be essential for governments, law enforcement, NGOs, and communities to collaborate to create a safer online environment for children. Proactive legislative frameworks must be in place to adapt to new technologies and tactics, ensuring that the harrowing specter of child exploitation is continuously addressed and ultimately diminished.
